Published: 2022/12/06 6:58:58

At the recent re:Invent conference, Amazon announced new EC2 instances for its AWS cloud services: C7gn, HPC7g and R7iz. R7iz uses Sapphire Rapids, Intel's latest fourth-generation Xeon Scalable processor, while HPC7g is powered by the new Graviton3E processor. At the same conference, AWS also announced Nitro v5, the fifth generation of its Nitro chip, and AWS Inferentia2, its second-generation inference chip. Of these, Graviton3E and Nitro v5 are based on the Arm architecture.

Graviton3E with improved performance

In HPC, Arm is still relatively rare. Apart from top-tier systems such as the Fugaku supercomputer, cloud service vendors rarely build HPC clusters on Arm chips. Graviton can be said to have changed this. The C6gn instance that AWS launched in 2020 is based on its Graviton2 processor. Compared with the C5 instance based on the second-generation AMD EPYC processor, C6gn achieves 20% lower cost and 40% higher performance.
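To see what those two figures imply together, the short Python sketch below combines them into a single performance-per-dollar ratio. It assumes, purely for illustration, that the 20% cost reduction and the 40% performance gain were measured on the same workload and can be multiplied together; the article does not state this, and the function name is hypothetical.

```python
def price_performance_gain(cost_reduction: float, perf_gain: float) -> float:
    """Ratio of new to baseline performance per dollar.

    cost_reduction: fractional cost saving, e.g. 0.20 for "20% lower cost".
    perf_gain: fractional performance gain, e.g. 0.40 for "40% higher".
    Assumption: both figures apply to the same workload.
    """
    if not 0.0 <= cost_reduction < 1.0:
        raise ValueError("cost_reduction must be in [0, 1)")
    if perf_gain <= -1.0:
        raise ValueError("perf_gain must be greater than -1")
    # New perf / new cost, relative to a baseline of 1.0 / 1.0.
    return (1.0 + perf_gain) / (1.0 - cost_reduction)


# Figures quoted for C6gn versus the x86 baseline.
gain = price_performance_gain(cost_reduction=0.20, perf_gain=0.40)
print(f"Performance per dollar: {gain:.2f}x the baseline")
```

Under that assumption, the quoted numbers work out to roughly 1.75 times the baseline performance per dollar.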

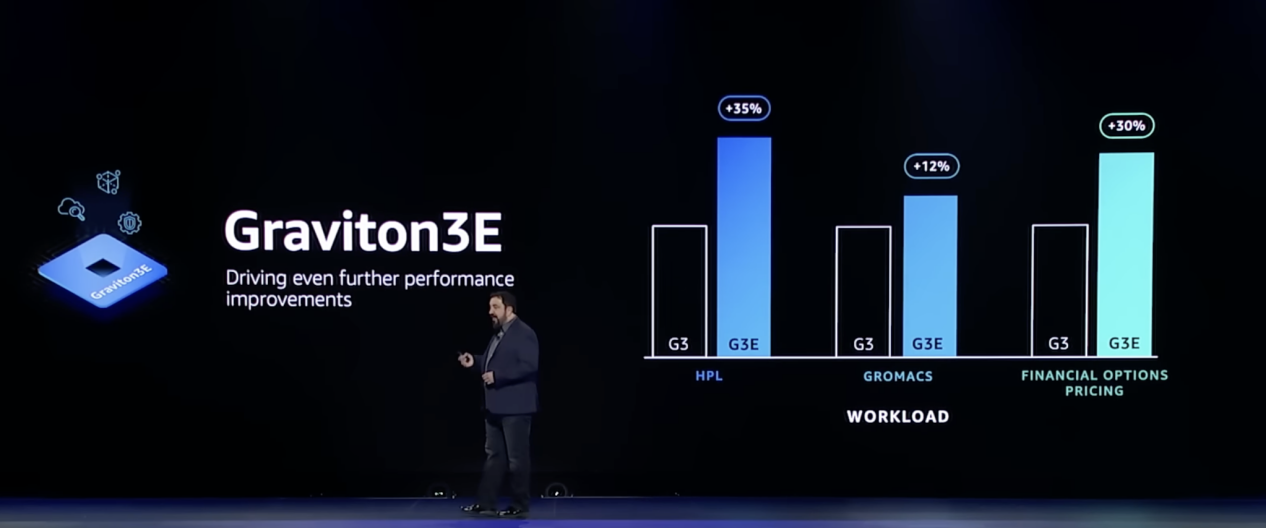

Performance improvement of Graviton3E under different loads / Amazon

According to Amazon's data, Graviton3E improves further on Graviton3. Taking HPL, a benchmark for floating-point performance, as an example, Graviton3E raises vector instruction processing performance by another 35%. Compared with the Graviton2-based C6gn instance, the HPC7g instance doubles floating-point performance, and it is 20% faster than the HPC6a instance based on the 48-core 3rd generation AMD EPYC 7003 series processor, making it, by Amazon's account, the most cost-effective HPC instance on AWS.

Today, Amazon offers more than 100 different Graviton instances for users to choose from. Graviton's benefits are not limited to elastic compute services such as AWS EC2: services such as Fargate, where users do not manage instances, have also seen considerable performance improvements.

However, most cloud service vendors still recommend x86-based instances first, which raises a question: will large companies really choose Arm server chips? Amazon answered yes, and pointed to how quickly Graviton has been adopted.

The partners Amazon listed show that Daewoo Infinity, Epic, Lyft, Zoom and other companies have chosen to build their products and services around the Graviton chip. For example, after the US TV streaming service DirecTV moved to Graviton3 instances, its cost fell by 20% and its latency fell by up to 50%.

Nitro and Inferentia intensify competition in DPUs and AI chips

For most hyperscale data centers and cloud service vendors, DPUs usually come from third parties, such as Nvidia's BlueField and AMD's Pensando. At Amazon, Annapurna Labs is the team behind Nitro, AWS's DPU product.

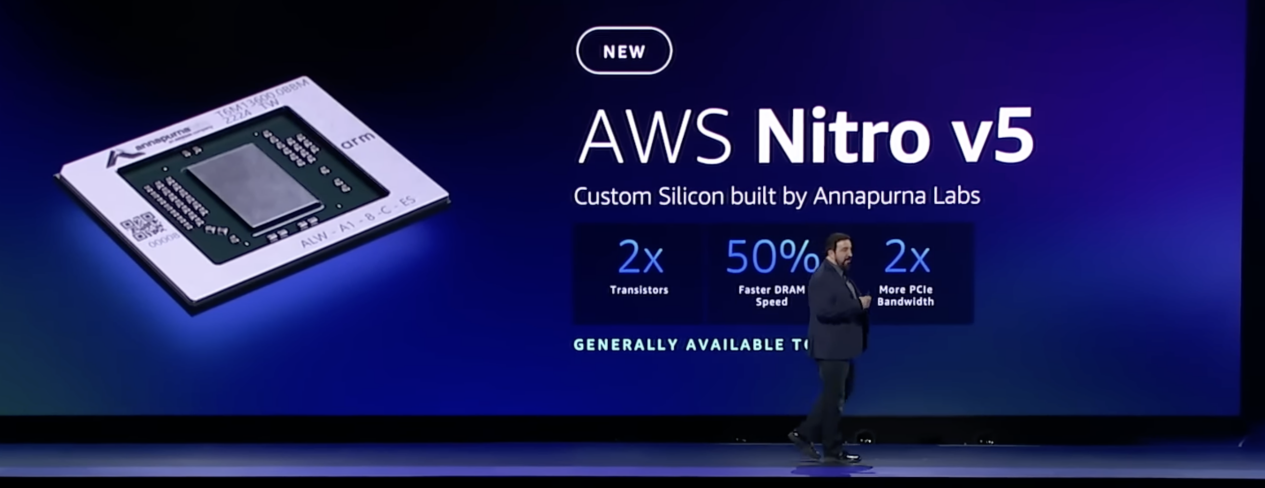

Nitro v5 chip / Amazon

This time, the Nitro v5 designed by Annapurna Labs takes another leap in performance. According to the data Amazon showed, Nitro v5 has twice as many transistors as the previous generation and nearly doubles the computing power. DRAM speed is 50% higher, and PCIe bandwidth is also doubled. This suggests that Nitro v5 moved to a more advanced process node and to the latest generation of DRAM and PCIe.

In actual tests, Nitro v5 increases throughput by up to 60%, reduces latency by 30%, and improves energy efficiency by 40%. This performance is why AWS chose to integrate it into C7gn and HPC7g instances, where it works with Graviton3 and Graviton3E to deliver 200Gbps of network bandwidth.

The emergence of large language models has pushed deep learning into its next stage, but their huge parameter counts increase the computing power and cost required for inference. In 2019, the first generation of AWS's Inferentia chips appeared in Inf1 instances, giving users a more cost-effective option than GPU instances. However, most deep learning models at that time had parameter counts in the millions. Now the parameters of some deep learning models have exceeded tens of billions, such as Baidu's PLATO-XL dialogue generation model and Amazon's AlexaTM.

To this end, Annapurna Labs has developed the brand-new Inferentia2 chip, which can support large deep learning models with up to 175 billion parameters. The Inf2 instance based on the Inferentia2 chip also supports distributed inference for the first time, splitting a large model across multiple chips. Compared with the previous generation of Inf1 instances, Inf2 provides up to 4 times the throughput and 1/10 the latency, and improves energy efficiency by as much as 50% compared with GPU instances.
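A rough calculation shows why a model of this size has to be spread across several chips. The Python sketch below estimates the memory needed just for the weights of a 175-billion-parameter model and the minimum number of accelerators required to hold them. The 2 bytes per parameter (16-bit weights) and the 32 GB of memory per chip are illustrative assumptions, not figures from the article, and the estimate ignores activations and other runtime memory.

```python
import math


def weight_memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Memory needed for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_chips_for_weights(num_params: int, bytes_per_param: int,
                          chip_memory_gb: float) -> int:
    """Smallest number of chips whose combined memory fits the weights.

    Ignores activations, caches, and framework overhead, so real
    deployments would need more headroom than this lower bound.
    """
    if chip_memory_gb <= 0:
        raise ValueError("chip_memory_gb must be positive")
    total_gb = weight_memory_gb(num_params, bytes_per_param)
    return math.ceil(total_gb / chip_memory_gb)


PARAMS = 175_000_000_000   # model size quoted in the article
BYTES_PER_PARAM = 2        # assumption: 16-bit weights
CHIP_MEMORY_GB = 32.0      # assumption: hypothetical per-chip memory

total = weight_memory_gb(PARAMS, BYTES_PER_PARAM)
chips = min_chips_for_weights(PARAMS, BYTES_PER_PARAM, CHIP_MEMORY_GB)
print(f"Weights: {total:.0f} GB, at least {chips} chips")
```

Under these assumptions the weights alone take about 350 GB, far more than any single accelerator holds, which is the motivation for distributing the model across multiple chips.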

Summary

Such a rapid cadence of chip releases shows that Amazon has reached a new level in self-developed server chips. It has to be said that acquiring Annapurna Labs as early as 2015 was a forward-looking strategic decision for Amazon. As competition among cloud service providers intensifies, owning self-developed, controllable server chips is undoubtedly a decisive advantage. Although companies such as Google and Alibaba have also begun developing their own server chips, they still trail Amazon's AWS in product lineup and deployment time.